Sunday, December 27, 2009

Science, engineering and technology

The distinction between science, engineering and technology is not always clear. Science is the reasoned investigation or study of phenomena, aimed at discovering enduring principles among elements of the phenomenal world by employing formal techniques such as the scientific method. Technologies are not usually exclusively products of science, because they have to satisfy requirements such as utility, usability and safety.

Engineering is the goal-oriented process of designing and making tools and systems to exploit natural phenomena for practical human means, often (but not always) using results and techniques from science. The development of technology may draw upon many fields of knowledge, including scientific, engineering, mathematical, linguistic and historical knowledge, to achieve some practical result.

Technology is often a consequence of science and engineering, although technology as a human activity precedes the two fields. For example, science might study the flow of electrons in electrical conductors by using already-existing tools and knowledge. This new-found knowledge may then be used by engineers to create new tools and machines, such as semiconductors, computers, and other forms of advanced technology. In this sense, scientists and engineers may both be considered technologists; the three fields are often considered as one for the purposes of research and reference.

Science:

- A body of knowledge

- Seeks to describe and understand the natural world and its physical properties

- Scientific knowledge can be used to make predictions

- Science uses a process--the scientific method--to generate knowledge

Engineering:

- Design under constraint

- Seeks solutions for societal problems and needs

- Aims to produce the best solution given resources and constraints

- Engineering uses a process--the engineering design process--to produce solutions and technologies

Technology:

- The body of knowledge, processes, and artifacts that result from engineering

- Almost everything made by humans to solve a need is a technology

- Examples of technology include pencils, shoes, cell phones, and processes to treat water

In the real world, these disciplines are closely connected. Scientists often use technologies created by engineers to conduct their research. In turn, engineers often use knowledge developed by scientists to inform the design of the technologies they create.

Technology

Technology deals with human as well as other animal species' usage and knowledge of tools and crafts, and how it affects a species' ability to control and adapt to its natural environment. The word technology comes from the Greek technología, a compound of téchnē, 'craft', and -logía, the study of something, or the branch of knowledge of a discipline. A strict definition is elusive; technology can be material objects of use to humanity, such as machines, but can also encompass broader themes, including systems, methods of organization, and techniques. The term can either be applied generally or to specific areas: examples include "construction technology", "medical technology", or "state of the art technology".

The human species' use of technology began with the conversion of natural resources into simple tools. The prehistoric discovery of the ability to control fire increased the available sources of food, and the invention of the wheel helped humans travel in and control their environment. Later technological developments, including the printing press, the telephone, and the Internet, have lessened physical barriers to communication and allowed humans to interact freely on a global scale. However, not all technology has been used for peaceful purposes; the development of weapons of ever-increasing destructive power has progressed throughout history, from clubs to nuclear weapons.

Technology has affected society and its surroundings in a number of ways. In many societies, technology has helped develop more advanced economies (including today's global economy) and has allowed the rise of a leisure class. Many technological processes produce unwanted by-products, known as pollution, and deplete natural resources, to the detriment of the Earth and its environment. Various implementations of technology influence the values of a society, and new technology often raises new ethical questions. Examples include the rise of the notion of efficiency in terms of human productivity, a term originally applied only to machines, and the challenge of traditional norms.

Tuesday, December 15, 2009

DIFFERENT SOURCE OF SOLAR ENERGY

While I have wandered from the main subject of the sun, to consider the source of stellar energy, the two topics are so intimately related that their solutions are identical. I consider that I have demonstrated the reasonableness of Jeans's theory by the manner in which it seems to fit the observed facts. There is, as I can see, no important objection to the hypothesis. It is too much to hope that the foregoing analysis is rigidly complete, but I confidently believe that the main points are established and that further modification will consist in the clearing up of details. The application of astrophysics and atomic theory to a new field appears to have met with considerable success. In spite of this success, however, caution is necessary. The present position of the theory advocated in this paper is somewhat analogous to the place once held by the theory of Helmholtz—i.e., it is the only one sufficiently elastic to stretch over the region of known facts. Our knowledge is yet limited and, with our vision thus impaired, we can not predict the future. Some unforeseen event may upset our present hypothesis as completely as that of Helmholtz; we have built as securely as possible upon observation, and it remains for the future to test the accuracy of this or any other theory so established.

In an attempt to discover a reasonable explanation of the origin and duration of the solar radiation, all possible sources of energy are examined. The following hypotheses are reviewed and discarded, the arguments against their validity being too well known to necessitate a review at this place:

(1) Original Heat;

(2) Chemical;

(3) Gravitational,

(a) Meteoric, (b) Contraction;

(4) Radioactive.

THE SOURCE OF SOLAR ENERGY

It is the massive gravity of the Sun that compresses the core to such a high pressure and resultant high temperature, which is then sufficient to ignite the fusion reactions that take place. The overall result is to convert 4 hydrogen atoms into one helium atom (see below). For every 1 kilogram of hydrogen that is consumed, most is turned into helium but a small portion, 0.007 kg, is turned into pure energy. Using the famous energy-mass equivalence formula (E = mc²) developed by Einstein, we can calculate that this mass amounts to a little over 600 trillion joules (6 × 10¹⁴ J). When related to the total energy output of the Sun, this means that the solar fusion reactions are consuming mass at almost 5 million tons per second!
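The arithmetic behind the 600 trillion joule figure can be checked with a few lines of Python. This is only a sketch: the function name is made up for illustration, and the 0.007 kg figure is taken directly from the paragraph above.

```python
# Speed of light in vacuum, in metres per second (exact by SI definition).
C = 299_792_458.0

def mass_to_energy_joules(mass_kg: float) -> float:
    """Return the energy E = m * c**2 equivalent to a mass in kilograms."""
    return mass_kg * C ** 2

# Mass converted to energy per kilogram of hydrogen fused (figure from the text).
converted_mass_kg = 0.007
energy_j = mass_to_energy_joules(converted_mass_kg)
print(f"{energy_j:.2e} J")  # a little over 6e14 J, i.e. about 600 trillion joules
```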

There are two distinct reactions in which 4 H atoms may eventually result in one He atom. The first of these is:

(1) ¹H + ¹H → ²D + e⁺ + ν

then ²D + ¹H → ³He + γ

then ³He + ³He → ⁴He + ¹H + ¹H

This reaction sequence is believed to be the most important one in the solar core. The total energy released by these reactions in turning 4 Hydrogen atoms into 1 Helium atom is 26.7 MeV.
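The 26.7 MeV figure can be reproduced from the difference in mass between four hydrogen atoms and one helium atom. The sketch below assumes standard reference values for the atomic masses and for the energy equivalent of one atomic mass unit; none of these constants appear in the original post, and the function names are illustrative.

```python
# Atomic masses in unified atomic mass units (u). These are standard
# reference values and are an assumption here, not taken from the post.
M_H1 = 1.00782503    # hydrogen-1 atom
M_HE4 = 4.00260325   # helium-4 atom
U_TO_MEV = 931.494   # energy equivalent of 1 u, in MeV

def fusion_energy_mev() -> float:
    """Energy released when 4 hydrogen atoms become 1 helium atom."""
    mass_defect_u = 4 * M_H1 - M_HE4
    return mass_defect_u * U_TO_MEV

def mass_fraction_converted() -> float:
    """Fraction of the original hydrogen mass that is turned into energy."""
    return (4 * M_H1 - M_HE4) / (4 * M_H1)

print(f"{fusion_energy_mev():.1f} MeV")        # close to the 26.7 MeV quoted above
print(f"{mass_fraction_converted():.4f}")      # close to the 0.007 kg per kg quoted earlier
```

Using atomic rather than bare nuclear masses means the electrons are already accounted for, so the energy from the positrons annihilating is included in the total.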

The second reaction generates less than 10% of the total solar energy. This involves carbon atoms which are not consumed in the overall process. The details of this "carbon cycle" are as follows:

(2) ¹²C + ¹H → ¹³N + γ

then ¹³N → ¹³C + e⁺ + ν

then ¹³C + ¹H → ¹⁴N + γ

then ¹⁴N + ¹H → ¹⁵O + γ

then ¹⁵O → ¹⁵N + e⁺ + ν

then ¹⁵N + ¹H → ¹²C + ⁴He + γ

The energy generated in the solar core escapes mostly in the form of very high energy gamma rays. This energy is absorbed and re-emitted many times by the layers overlying the core, as the photons (bits of electromagnetic energy) diffuse out toward the surface. In doing so, the energy is degraded; gamma photons are turned into X-ray photons, then into UV photons, and finally into visible light and infrared photons. And so it is light and heat that are finally radiated from the surface of the Sun into interplanetary space. This same heat and light has a flux density of 1370 watts per square metre by the time it arrives at the upper atmosphere of the Earth. It is this energy that makes life possible on the surface of the Earth, that produces our terrestrial weather, and that photovoltaic cells can convert into electrical energy. Solar energy is, in actuality, nuclear energy.

Solar water heating

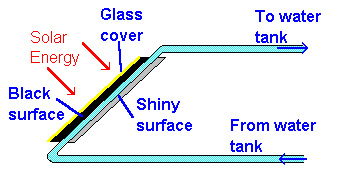

Solar water heating uses heat from the Sun to heat water in glass panels on your roof. This means you don't need to use so much gas or electricity to heat your water at home. Water is pumped through pipes in the panel. The pipes are painted black, so they get hotter when the Sun shines on them. The water is pumped in at the bottom so that convection helps the flow of hot water out of the top.

Solar water heating is easily worthwhile in places like California and Australia, where you get lots of sunshine.

Water heating

Solar hot water systems use sunlight to heat water. In low geographical latitudes (below 40 degrees) from 60 to 70% of the domestic hot water use with temperatures up to 60 °C can be provided by solar heating systems. The most common types of solar water heaters are evacuated tube collectors (44%) and glazed flat plate collectors (34%) generally used for domestic hot water; and unglazed plastic collectors (21%) used mainly to heat swimming pools.

Solar Cells

Solar cells (really called "photovoltaic", "PV" or "photoelectric" cells) convert light directly into electricity.

In a sunny climate, you can get enough power to run a 100W light bulb from just one square metre of solar panel. Solar cells were originally developed in order to provide electricity for satellites, but these days many of us own calculators powered by solar cells.
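The 100W-per-square-metre figure follows from a simple product of sunlight intensity, panel area and conversion efficiency. The sketch below is a rough illustration only: the irradiance of 1000 W per square metre at ground level and the 10% efficiency are assumed round numbers, not values given in the post.

```python
def panel_output_watts(irradiance_w_m2: float, area_m2: float, efficiency: float) -> float:
    """Electrical power from a panel: sunlight intensity x area x efficiency."""
    return irradiance_w_m2 * area_m2 * efficiency

# Assumed values (not from the post): bright midday sun at ground level
# and a modest conversion efficiency.
ASSUMED_IRRADIANCE_W_M2 = 1000.0
ASSUMED_EFFICIENCY = 0.10

power_w = panel_output_watts(ASSUMED_IRRADIANCE_W_M2, area_m2=1.0, efficiency=ASSUMED_EFFICIENCY)
print(f"{power_w:.0f} W from one square metre")
```

Under these assumptions one square metre gives roughly the 100 W mentioned above; a more efficient panel or a larger area scales the result proportionally.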

Solar lighting

Daylighting systems collect and distribute sunlight to provide interior illumination. This passive technology directly offsets energy use by replacing artificial lighting, and indirectly offsets non-solar energy use by reducing the need for air-conditioning. Although difficult to quantify, the use of natural lighting also offers physiological and psychological benefits compared to artificial lighting. Daylighting design implies careful selection of window types, sizes and orientation; exterior shading devices may be considered as well. Individual features include sawtooth roofs, clerestory windows, light shelves, skylights and light tubes. They may be incorporated into existing structures, but are most effective when integrated into a solar design package that accounts for factors such as glare, heat flux and time-of-use. When daylighting features are properly implemented they can reduce lighting-related energy requirements by 25%.

Hybrid solar lighting is an active solar method of providing interior illumination. HSL systems collect sunlight using focusing mirrors that track the Sun and use optical fibers to transmit it inside the building to supplement conventional lighting. In single-story applications these systems are able to transmit 50% of the direct sunlight received.

Solar lights that charge during the day and light up at dusk are a common sight along walkways.

Solar energy

Solar energy, radiant light and heat from the Sun, has been harnessed by humans since ancient times using a range of ever-evolving technologies. Solar radiation, along with secondary solar-powered resources such as wind and wave power, hydroelectricity and biomass, account for most of the available renewable energy on Earth. Only a minuscule fraction of the available solar energy is used.

Solar powered electrical generation relies on heat engines and photovoltaics. Solar energy's uses are limited only by human ingenuity. A partial list of solar applications includes space heating and cooling through solar architecture, potable water via distillation and disinfection, daylighting, solar hot water, solar cooking, and high temperature process heat for industrial purposes. The most common way to harvest solar energy is to use solar panels.

Solar technologies are broadly characterized as either passive solar or active solar depending on the way they capture, convert and distribute solar energy. Active solar techniques include the use of photovoltaic panels and solar thermal collectors to harness the energy. Passive solar techniques include orienting a building to the Sun, selecting materials with favorable thermal mass or light dispersing properties, and designing spaces that naturally circulate air.

Monday, December 14, 2009

THE LIFTER-CRAFT PROJECT

Energy development

Energy development is the effort to provide sufficient primary energy sources and secondary energy forms to meet demand. Major considerations include supply, cost, impact on air pollution and water pollution, and whether or not the source is renewable.

Technologically advanced societies have become increasingly dependent on external energy sources for transportation, the production of many manufactured goods, and the delivery of energy services. This energy allows people who can afford the cost to live under otherwise unfavorable climatic conditions through the use of heating, ventilation, and/or air conditioning. Level of use of external energy sources differs across societies, as do the climate, convenience, levels of traffic congestion, and pollution. With the exception of nuclear, tidal and geothermal energy, all terrestrial energy sources are from current solar insolation or from fossil remains of plant and animal life that relied directly and indirectly upon sunlight, respectively. Ultimately, solar energy itself is the result of the Sun's nuclear fusion. Geothermal power from hot, hardened rock above the magma of the Earth's core is the result of the decay of radioactive materials present beneath the Earth's crust.

Computer

A computer is a machine that manipulates data according to a set of instructions.

Although mechanical examples of computers have existed through much of recorded human history, the first electronic computers were developed in the mid-20th century (1940–1945). These were the size of a large room, consuming as much power as several hundred modern personal computers (PCs). Modern computers based on integrated circuits are millions to billions of times more capable than the early machines, and occupy a fraction of the space. Some computers are small enough to fit into a wristwatch, and can be powered by a watch battery. Personal computers in their various forms are icons of the Information Age and are what most people think of as "computers". The embedded computers found in many devices, from MP3 players to fighter aircraft and from toys to industrial robots, are however the most numerous.

The ability to store and execute lists of instructions called programs makes computers extremely versatile, distinguishing them from calculators. The Church–Turing thesis is a mathematical statement of this versatility: any computer with a certain minimum capability is, in principle, capable of performing the same tasks that any other computer can perform. Therefore computers ranging from a mobile phone to a supercomputer are all able to perform the same computational tasks, given enough time and storage capacity.
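The idea of a stored program can be illustrated with a toy machine whose behaviour is determined entirely by the list of instructions it is handed. The instruction set below (`SET`, `ADD`, `DEC`, `JNZ`, `HALT`) is invented for this sketch and does not correspond to any real processor.

```python
def run(program, registers):
    """Execute a list of instructions on a copy of the given registers."""
    regs = dict(registers)
    pc = 0  # program counter: index of the next instruction to execute
    while pc < len(program):
        instr = program[pc]
        op = instr[0]
        if op == "HALT":
            break
        if op == "SET":        # SET reg value
            regs[instr[1]] = instr[2]
        elif op == "ADD":      # ADD dst src  ->  dst = dst + src
            regs[instr[1]] += regs[instr[2]]
        elif op == "DEC":      # DEC reg      ->  reg = reg - 1
            regs[instr[1]] -= 1
        elif op == "JNZ":      # JNZ reg target: jump if reg is not zero
            if regs[instr[1]] != 0:
                pc = instr[2]
                continue
        else:
            raise ValueError(f"unknown opcode: {op}")
        pc += 1
    return regs

# Program 1: multiply a by b using repeated addition.
MULTIPLY = [
    ("SET", "out", 0),
    ("JNZ", "b", 3),
    ("HALT",),
    ("ADD", "out", "a"),
    ("DEC", "b"),
    ("JNZ", "b", 3),
    ("HALT",),
]

# Program 2: double a. Same machine, different stored program.
DOUBLE = [("ADD", "a", "a"), ("HALT",)]

print(run(MULTIPLY, {"a": 6, "b": 7})["out"])  # 42
print(run(DOUBLE, {"a": 21})["a"])             # 42
```

The `run` function never changes; only the data passed in as `program` does. A calculator, by contrast, has its operations fixed in advance.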

Friday, November 27, 2009

CIO - Information Technology Publication:

CIO is a publication that targets executives in Information Technology. CIO stands for Chief Information Officer, a high-level strategy role in many larger organizations. CIO is a very popular publication, well respected amongst senior technology staff. Many of the topics covered at this level deal with trade-offs between business objectives and technology. The publication often features career development stories, such as how to network effectively with your peers, and information about senior-level career paths other than CIO.

- Audience:

Senior level information technology executives, or others that appreciate a higher-level view of the industry, one that is less hands-on, more strategic.

- Content:

CIO is mostly high-level current news. There is a lot of senior level information about the direction of the industry and how to manage the trade-offs between business needs and technology.

Information Technology

A Definition:

We use the term information technology or IT to refer to an entire industry. In actuality, information technology is the use of computers and software to manage information. In some companies, this is referred to as Management Information Services (or MIS) or simply as Information Services (or IS). The information technology department of a large company would be responsible for storing information, protecting information, processing the information, transmitting the information as necessary, and later retrieving information as necessary.

History of Information Technology:

In relative terms, it wasn't long ago that the Information Technology department might have consisted of a single Computer Operator, who might be storing data on magnetic tape, and then putting it in a box down in the basement somewhere. The history of information technology is fascinating! Check out these resources for information on everything from the history of IT to electronics inventions and even the top 10 IT bugs.

Modern Information Technology Departments:

In order to perform the complex functions required of information technology departments today, the modern Information Technology Department would use computers, servers, database management systems, and cryptography.

Thursday, November 26, 2009

IT Governance

IT Governance, or Information Technology Governance, is a subset of Corporate Governance focused on information technology (IT) systems performance and risk management. There is a continual interest in IT governance as a result of compliance initiatives and the knowledge that IT projects can easily get out of control and have a serious effect on the performance of a company.

A characteristic theme of IT governance discussions is that IT can no longer operate in a “black box.” Traditionally, board-level executives stayed out of the IT decision making process. IT governance implies a system in which all stakeholders, including the board, have input into the information technology decision making process. This prevents IT from independently making decisions that can affect the outcome of the entire organization.

A popular new certification program is Certified in the Governance of Enterprise Information Technology (CGEIT), an advanced certification created in 2007. It is designed for experienced professionals serving in a management or advisory role focused on the governance and control of IT at an enterprise level.

Information Security

Information security refers to protecting information and information systems from unauthorized access, use, disclosure, disruption, modification, or destruction. The goals of information security include protecting the confidentiality, integrity and availability of information.
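Of the three goals, integrity is the easiest to illustrate in a few lines. A common approach, sketched here with Python's standard `hashlib` and `hmac` modules, is to record a cryptographic fingerprint of the data and compare it later. The function names and sample record are made up for illustration, and the sketch assumes the stored fingerprint itself is kept somewhere an attacker cannot change it.

```python
import hashlib
import hmac

def fingerprint(data: bytes) -> str:
    """Return the SHA-256 digest of the data as a hex string."""
    return hashlib.sha256(data).hexdigest()

def is_unmodified(data: bytes, expected_fingerprint: str) -> bool:
    """Check data against a previously recorded fingerprint."""
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(fingerprint(data), expected_fingerprint)

record = b"account=1234;balance=100.00"
stored = fingerprint(record)

print(is_unmodified(record, stored))                          # True
print(is_unmodified(b"account=1234;balance=900.00", stored))  # False
```

This detects modification but does nothing for confidentiality or availability, which need separate measures such as encryption and redundancy.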

All organizations, including governments, military, financial institutions, hospitals, and private businesses, gather and store a great deal of confidential information about their employees, customers, products, research, and financial operations. Most of this information is collected, processed and stored electronically and transmitted across networks to other computers. Protecting confidential information is a business requirement, and in many cases also an ethical and legal requirement. For the individual, information security has a significant effect on privacy and identity theft.

The field of information security has grown significantly in recent years. There are many areas for specialization, including Information Systems Auditing, Business Continuity Planning and Digital Forensics Science. There are also specific information security technical certifications that can assist in getting started in this field.

Monday, November 16, 2009

Information Technology - Definition and History

Information Technology – A Definition:

We use the term information technology or IT to refer to an entire industry. In actuality, information technology is the use of computers and software to manage information. In some companies, this is referred to as Management Information Services (or MIS) or simply as Information Services (or IS). The information technology department of a large company would be responsible for storing information, protecting information, processing the information, transmitting the information as necessary, and later retrieving information as necessary.

History of Information Technology:

In relative terms, it wasn't long ago that the Information Technology department might have consisted of a single Computer Operator, who might be storing data on magnetic tape, and then putting it in a box down in the basement somewhere. The history of information technology is fascinating! Check out these history of information technology resources for information on everything from the history of IT to electronics inventions and even the top 10 IT bugs.

Modern Information Technology Departments:

In order to perform the complex functions required of information technology departments today, the modern Information Technology Department would use computers, servers, database management systems, and cryptography. The department would be made up of several System Administrators, Database Administrators and at least one Information Technology Manager. The group usually reports to the Chief Information Officer (CIO).

Popular Information Technology Skills:

Some of the most popular information technology skills at the moment are:

Thursday, November 12, 2009

LANforge ICE WAN/Network Emulator

LANforge-ICE, WAN Simulator

- Reduces lab and training costs by replacing expensive WAN hardware, such as T1 and FrameRelay devices.

- Automates testing with various scripting features and libraries.

- Compact form factor and rack-mount chassis conserve valuable lab space.

- Netbook, Laptop or network appliance form factor makes LANforge-ICE a friendly traveling companion, and a good option for trade shows, customer demos and shared work spaces.

- Validates stability and functionality of devices and programs functioning across a wide variety of network conditions.

- Very affordable, especially when compared to competitors.

- Delivers advanced, remote, cross-platform, graphical management interface.

- Implements a modular architecture that allows you to leverage your existing LANforge investment as your need for capacity increases.

- Turn key solution. The LANforge systems come pre-installed and ready to run.

- Ease of use - central management of entire LANforge system from anywhere on the network.

LANforge-ICE: Feature Highlights

- General purpose WAN and Network impairment emulator.

- Able to simulate DS1, DS3, OC-3, OC-12, OC-24, OC-48, GigE, DSL, CableModem, Satellite links and other rate-limited networks, from 10bps up to 2.4 Gbps speeds (full duplex).

- Can modify various network attributes including: network-speed, latency, jitter, packet-loss, packet-reordering, and packet-duplication.

- Supports Packet corruptions, including bit-flips, bit-transposes and byte-overwrites.

- Supports WanPath feature to allow configuration of specific behaviour between different IP subnets or MAC addresses using a single pair of physical interfaces.

- Able to impair packets based on an arbitrary filter that is created using the popular and well-documented tcpdump filter syntax.

- Supports WAN emulation across virtual 802.1Q VLAN interfaces for more efficient use of valuable physical network interfaces.

- Ethernet hardware bypass option allows LANforge to be deployed in networks with high availability requirements.

- Supports routed and bridged mode for more flexibility in how you configure your network and LANforge-ICE. Virtual routers can be configured with the Netsmith tool. Supported routing protocols include: IPv4 static routing, IPv6 static routing, IPv4 OSPF, IPv6 OSPF, IPv4 Multicast routing (IGMP) and BGP. LANforge-ICE on Windows and Solaris supports only bridged mode currently.

- Supports 'WAN-Playback' allowing one to capture the characteristics of a live WAN and later have LANforge-ICE emulate those captured characteristics. The playback file is in XML format, and can be easily created by hand or with scripts. The LANforge-ICEcap tool can be used to probe networks and automatically create the XML playback file.

- Available configurations include all-in-one Netbooks, Laptops, silent appliances and rackmount systems for demo, desktop, benchtop and lab environments.

- Allows packet sniffing and network protocol decoding with the integrated Wireshark protocol sniffer.

- Comprehensive management information detailing all aspects of the LANforge system including processor card statistics, test cases, and ethernet port statistics.

- GUI runs as Java application on Linux, Solaris and Microsoft Operating Systems (among others).

- GUI can run remotely, even over low-bandwidth links to accommodate the needs of the users.

- Central management application can manage multiple units, tests, and testers simultaneously.

- Supports scriptable command line interface (telnet) which can be used to automate test scenarios. Perl libraries and example scripts are also provided!

- Automatic discovery of LANforge processor cards simplifies maintenance of LANforge test equipment.

- LANforge systems come pre-installed and configured with customer supplied network information.

- LANforge-FIRE feature set may be combined with LANforge-ICE for more realistic testing.
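To make the impairment terms in the list above concrete (latency, jitter, packet loss, reordering and duplication), here is a minimal software sketch of what a network impairment emulator does to a stream of packets. It is not LANforge code and does not use any LANforge API; the function name, parameters and default values are all hypothetical.

```python
import random

def impair(packets, latency_ms=50.0, jitter_ms=10.0, loss=0.1, duplicate=0.05, seed=0):
    """Apply simple impairments to a list of (send_time_ms, payload) packets.

    Returns a list of (arrival_time_ms, payload) sorted by arrival time.
    Reordering is not applied directly: it emerges when jitter makes a
    later packet arrive before an earlier one.
    """
    rng = random.Random(seed)  # seeded so a test run is repeatable
    delivered = []
    for send_ms, payload in packets:
        if rng.random() < loss:
            continue  # packet loss: this packet never arrives
        copies = 2 if rng.random() < duplicate else 1  # packet duplication
        for _ in range(copies):
            delay = latency_ms + rng.uniform(-jitter_ms, jitter_ms)
            delivered.append((send_ms + max(delay, 0.0), payload))
    delivered.sort(key=lambda item: item[0])
    return delivered

# Ten packets sent 1 ms apart; jitter larger than the spacing can reorder them.
stream = [(float(i), f"pkt-{i}") for i in range(10)]
for arrival_ms, payload in impair(stream):
    print(f"{arrival_ms:7.2f} ms  {payload}")
```

A hardware emulator applies the same kinds of transformation to live traffic between two physical interfaces, and adds rate limiting and corruption, which this sketch leaves out.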

LANforge Netsmith: Virtual Network Builder

Netsmith is a drag-and-drop virtual network builder. It can support virtual routers, emulated network links, bridges (switches), virtual and physical interfaces, and more. When using routers, it supports static routing for IPv4 and IPv6, OSPF routing for IPv4 and IPv6 and IPv4 multicast routing protocols. LANforge-FIRE stateful traffic generating connections and LANforge-ICE network emulations are easily placed in the virtual networks. The virtual routers can connect to external OSPF and multicast routers and static subnet routing for easy integration into your network.

- Emulates networks of arbitrary complexity using real-world routing protocols by integrating with the XORP router daemon.

- Supports IPv4 and IPv6 static routing.

- Supports IPv4 and IPv6 OSPF routing.

- Supports IPv4 multicast routing.

- Supports ethernet bridges, including spanning tree protocol (STP).

- The virtual interfaces are 'real', so you can configure them like normal network interfaces and use sniffers and other tools on the individual interfaces.

- Virtual router interconnections can be associated with LANforge-ICE network emulations.

- Interfaces can be associated with LANforge-FIRE stateful traffic generation connections.

- See the LANforge-FIRE and LANforge-ICE cookbook for examples of how Netsmith works.

LANforge ICEcap Network Probe Feature Highlights

- The LANforge-ICEcap tool can probe a network and save the probed latency, packet loss and other values to an XML file that can be replayed by the LANforge-ICE WAN emulator. This allows for realistic WAN emulations based on real-world networks.

- LANforge-ICEcap currently supports Linux and Windows.

LANforge FIRE Stateful Network Traffic Generator

LANforge-FIRE Stateful Network Traffic Generator

- Validates stability and data throughput on devices under evaluation.

- Useful for testing any network, and especially cost effective for efforts requiring many data-generating ports, such as DSL, Cable-Modem, and Satellite modems.

- Turn key solution. The LANforge systems come pre-installed and ready to run.

- Implements a modular architecture that allows you to leverage your existing LANforge investment as your need for capacity increases.

- Ease of use - Manage entire LANforge installation through one interface, from anywhere on the network.

LANforge VoIP/RTP Call Generator Feature Highlights

- SIP and/or H.323 protocol used for call management.

- SIP and H.323 are now supported on both Windows and Linux.

- SIP/UDP supported, H.323 uses both UDP and TCP.

- Can use directed mode, where VoIP phones call directly to themselves.

- Can also use Gateway mode where the VoIP phones register with a SIP or H.323 gateway.

- SIP authentication is supported.

- RTP protocol used for streaming media transport, and supports the following CODECS. More codecs may be supported in the future.

- G.711u: 64kbps data stream, 50 packets per second (SIP, H.323)

- G.729a: 8kbps data stream, 50 packets per second (SIP ONLY)

- Speex: 16kbps data stream, 50 packets per second (SIP on Linux ONLY)

- G.726-16: 16kbps data stream, 50 packets per second (SIP ONLY)

- G.726-24: 24kbps data stream, 50 packets per second (SIP ONLY)

- G.726-32: 32kbps data stream, 50 packets per second (SIP ONLY)

- G.726-40: 40kbps data stream, 50 packets per second (SIP ONLY)

- NONE: A messaging-only configuration is now supported (SIP ONLY)

- Supports PESQ automated voice quality testing.

- RTCP protocol used for streaming media statistics (SIP only)

- Each LANforge VoIP/RTP endpoint can play from a wav file and record to a separate wav file. Almost any sound file can be converted to the correct wav file format with tools bundled with LANforge. Sample voice files are included.

- Current benchmarks show support for 140 or more emulated VoIP phones per machine.

- LANforge VoIP/RTP endpoints can call other LANforge endpoints or third party SIP or H.323 phones like Cisco and Grandstream. Third party phones can also call LANforge endpoints and hear the WAV file being played.
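The codec figures in the list above fix the size of each RTP packet: at 50 packets per second, each packet carries 20 ms of audio. The sketch below works out the payload size per packet for each listed codec. The 40 bytes of header overhead (minimal IPv4, UDP and RTP headers) is an assumption added for illustration and is not stated in the list; the function names are also illustrative.

```python
# Codec bitrates in bits per second, as listed above.
CODEC_BITRATES_BPS = {
    "G.711u": 64_000,
    "G.729a": 8_000,
    "Speex": 16_000,
    "G.726-16": 16_000,
    "G.726-24": 24_000,
    "G.726-32": 32_000,
    "G.726-40": 40_000,
}
PACKETS_PER_SECOND = 50  # from the list above, i.e. 20 ms of audio per packet

# Assumption (not from the list): minimal IPv4 (20) + UDP (8) + RTP (12) headers.
HEADER_BYTES = 20 + 8 + 12

def payload_bytes(bitrate_bps: int, pps: int = PACKETS_PER_SECOND) -> int:
    """Audio payload carried by each packet, in bytes."""
    return bitrate_bps // (8 * pps)

def ip_bandwidth_bps(bitrate_bps: int, pps: int = PACKETS_PER_SECOND) -> int:
    """Bandwidth at the IP layer once per-packet headers are added."""
    return (payload_bytes(bitrate_bps, pps) + HEADER_BYTES) * 8 * pps

for name, rate in CODEC_BITRATES_BPS.items():
    print(f"{name}: {payload_bytes(rate)} payload bytes per packet, "
          f"{ip_bandwidth_bps(rate)} bit/s at the IP layer")
```

For low-bitrate codecs the headers dominate: G.729a carries 20 bytes of audio per packet under 40 bytes of headers, which is why per-call bandwidth is much higher than the codec rate alone suggests.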

LANforge FIRE & Armageddon: Feature Highlights

- Supports real-world protocols: (Benchmarked on high-end Candela Technologies-supplied hardware, typically equivalent to the LF1002 server.)

- Layer 2: Raw-Ethernet (225 Mbps+ bi-directional on GigE)

- PPP: Supports PPP and multi-link PPP over T1/E1 interfaces at full line speed

- Layer 3: Armageddon accelerated UDP/IP (9.99 Gbps+ with 1514 byte frames on 10 GE; 990 Mbps, 81,800 pps on GigE; both symmetrical and bidirectional, sending to self (2 ports))

- Layer 3: UDP/IP (990 Mbps+ bi-directional with 64K byte PDUs (1500 byte MTU) on GigE)

- Layer 3: UDP/IPv6 (990 Mbps+ bi-directional with 64K byte PDUs (1500 byte MTU) on GigE)

- Layer 3: IGMP Multicast UDP (500+ receivers)

- Layer 3: IGMP Multicast UDP over IPv6 (500+ receivers)

- Layer 3: Stateful TCP/IP (980 Mbps+ bi-directional with 64K byte writes (1500 byte MTU) on GigE)

- Layer 3: Stateful TCP/IPv6 (980 Mbps+ bi-directional with 64K byte writes (1500 byte MTU) on GigE)

- Layer 4: FTP (200 Mbps+, bi-directional, per processor)

- Layer 4: HTTP (4 Gbps+ download, 65,000+/13,000+ Requests per Second, 3,000+ concurrent connections)

- Layer 4: HTTPS (990Mbps+ download)

- Layer 4: TELNET (not benchmarked, via integrated script)

- Layer 4: PING (not benchmarked, via integrated script)

- Layer 4: DNS (not benchmarked, via integrated script)

- Layer 4: SMTP (not benchmarked, via integrated script)

- Layer 4: VoIP Call Generator (SIP, RTP, RTCP, PESQ/MOS), 250+ calls per machine

- Layer 4: Streaming audio and video with flexible plugin architecture.

- Supports over 2000 connections on a single machine.

- Supports real-world compliance with ARP protocol.

- Supports ToS (QoS) settings for TCP/IP and UDP/IP connections.

- Uses publicly available Linux, Windows and Solaris networking stack for increased standards compliance.

- Utilizes libcurl for FTP, HTTP and HTTPS (SSL) protocols.

- Supports file system test endpoints (can be used for NFS, SMB, and iSCSI file systems too!). Can emulate 1000+ CIFS and/or NFS clients with unique mount points, IPs, MACs, etc.

- Supports custom and command-line programs, like telnet, SMTP, and ping.

- Comprehensive traffic reports include: Packet Transmit rate, Packet Receive rate, Packet Receive Drop %, Transmit Bytes, Receive Bytes, Latency, various ethernet driver level counters, and much more.

- Supports generation of reports that are ready to be imported into your favorite spread-sheet.

- Allows packet sniffing and network protocol decoding with the integrated Wireshark protocol sniffer.

- GUI runs as Java application on Linux, Solaris and Microsoft Operating Systems (among others).

- GUI can run remotely, even over low-bandwidth links to accommodate the needs of the users.

- Central management application can manage multiple units, tests, and testers simultaneously.

- Supports scriptable command line interface (telnet) which can be used to automate test scenarios. Perl libraries and example scripts are also provided!

- Comprehensive management information detailing all aspects of the LANforge system including processor card statistics, test cases, and ethernet port statistics.

- Supports 20 or more physical data-generating ethernet ports per 2U LANforge chassis.

- Emulates over 2000 unique machines with one physical interface with the MAC-VLAN feature!

- Supports over 2000 802.1Q VLANs.

- Supports PPP-over-T1/E1 and PPPoE, including automated creation and deletion of the PPP interfaces

- Supports 802.11a/b/g with WiFIRE feature set (see below.)

- Automatic discovery of LANforge data generators simplifies configuration of LANforge test equipment.

- LANforge stateful traffic generation and management software supported on Red Hat Linux, Microsoft Windows and Solaris.

- Custom packet builder interface allows hand crafting of headers and payloads. Headers supported at Layer 2 include ARP, SNAP/LLC, 802.1Q, 802.1QinQ and MPLS. Some Layer 3 protocol headers supported include IP, IPX, UDP, TCP, ICMP, IGMP, IP-ENCAP, RDP, IPinIP and IPv6 protocols.
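The Armageddon figures in the list above (990 Mbps at 81,800 pps with 1514 byte frames on GigE) can be sanity-checked with simple arithmetic. The sketch below is only an illustration: it assumes the quoted frame size is the whole on-wire cost per packet (no preamble, inter-frame gap or FCS), and the function names are made up for this example rather than taken from LANforge.

```python
def throughput_mbps(pps: float, frame_bytes: int) -> float:
    """Throughput in Mbps for a packet rate and a frame size in bytes."""
    bits_per_frame = frame_bytes * 8
    return pps * bits_per_frame / 1_000_000


def packet_rate(rate_bps: float, frame_bytes: int) -> float:
    """Packets per second needed to reach rate_bps with the given frame size."""
    return rate_bps / (frame_bytes * 8)


# Figures quoted in the feature list.
gige_mbps = throughput_mbps(81_800, 1514)
ten_ge_pps = packet_rate(9.99e9, 1514)

print(f"GigE at 81,800 pps: {gige_mbps:.1f} Mbps")
print(f"10 GE at 9.99 Gbps: {ten_ge_pps:,.0f} pps")
```

Under these assumptions the GigE case works out to roughly 990.8 Mbps, which agrees with the quoted 990 Mbps.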

LANforge WiFIRE 802.11a/b/g Stateful Traffic Generator

- Useful for testing Wireless Access Points and deployments.

- Can emulate up to 126 802.11a/b/g wireless client stations (Virtual STAs) per radio.

- Each Virtual STA can be associated with a particular Access Point (AP).

- Each Virtual STA has unique MAC address, IP address and routing table.

- 128bit WEP, WPA2 and related wpa_supplicant authentication methods supported.

- Supports all LANforge FIRE stateful traffic generation features, including HTTP, TCP, UDP, VOIP (SIP, RTP) and more.
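To make the idea of Virtual STAs with unique identities concrete, here is a minimal sketch of how one might generate 126 distinct MAC and IP pairs. It does not reflect how LANforge assigns addresses: the `VirtualStation` type, the `make_virtual_stations` helper, the locally administered `02:...` MAC range and the `10.0.0.10` starting address are all assumptions chosen for illustration.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class VirtualStation:
    name: str
    mac: str
    ip: str


def make_virtual_stations(count: int, first_ip: str = "10.0.0.10") -> list:
    """Build `count` stations, each with a unique MAC and IPv4 address."""
    base_ip = ipaddress.IPv4Address(first_ip)
    stations = []
    for i in range(count):
        # First octet 0x02 marks a locally administered, unicast MAC.
        mac_int = (0x02 << 40) | i
        mac = ":".join(f"{b:02x}" for b in mac_int.to_bytes(6, "big"))
        stations.append(VirtualStation(f"sta{i}", mac, str(base_ip + i)))
    return stations


stations = make_virtual_stations(126)
print(stations[0])
print(stations[-1])
```

A real deployment would also need a per-station routing table, as the list above notes; that part depends on the host operating system and is left out here.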

LANforge NetReplay & Backtrack Feature Highlights

- Using a combination of the LANforge-FIRE traffic generation and LANforge-ICE network emulation, LANforge supports capture and replay of ethernet packet streams.

- Captured data can be converted to the standard 'libpcap' format for use with other tools such as Ethereal (now Wireshark) and tcpdump.

- Capture has been benchmarked at 1Gbps bi-directional on high-end hardware using 6TB RAID configuration.
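The source does not describe LANforge's own capture format, so the conversion itself cannot be shown. As a rough illustration of the target side only, the sketch below writes frames in the classic libpcap file layout (24 byte global header, then a 16 byte header per record). The helper names and the dummy frame are assumptions for this example.

```python
import io
import struct

PCAP_MAGIC = 0xA1B2C3D4      # classic pcap, microsecond timestamps
LINKTYPE_ETHERNET = 1


def pcap_global_header(snaplen: int = 65535) -> bytes:
    """24 byte file header: magic, version 2.4, zone, sigfigs, snaplen, linktype."""
    return struct.pack("<IHHiIII", PCAP_MAGIC, 2, 4, 0, 0, snaplen, LINKTYPE_ETHERNET)


def pcap_record(frame: bytes, timestamp: float) -> bytes:
    """16 byte record header (seconds, microseconds, lengths) followed by the frame."""
    ts_sec = int(timestamp)
    ts_usec = int((timestamp - ts_sec) * 1_000_000)
    return struct.pack("<IIII", ts_sec, ts_usec, len(frame), len(frame)) + frame


def write_pcap(stream, frames) -> None:
    """Write (timestamp, frame_bytes) pairs to a binary stream."""
    stream.write(pcap_global_header())
    for timestamp, frame in frames:
        stream.write(pcap_record(frame, timestamp))


# Dummy 60 byte Ethernet frame: broadcast destination, made-up source, IPv4 ethertype.
dummy = bytes.fromhex("ffffffffffff" "020000000001" "0800") + bytes(46)
buffer = io.BytesIO()
write_pcap(buffer, [(1.5, dummy)])
print(len(buffer.getvalue()), "bytes written")
```

Passing an open file (mode `"wb"`) instead of the `BytesIO` buffer should give a file that tcpdump and Wireshark can read.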

WAN Information - Wide Area Networks

Tuesday, November 10, 2009

LAN Information - Local Area Networks

Also Known As: Local Area Network

Monday, November 9, 2009

Communications-enabled application

Communication enablement adds real-time networking functionality to an IT application. Providing communications capability to an IT application:

- removes the human latency which exists when (i) making sense of information from many different sources, (ii) orchestrating suitable responses to events, and (iii) keeping track of actions carried out when responding to information received;

- enables users to be part of the creative flow of content and processes.

What distinguishes a CEA from other software applications is its intrinsic reliance upon communications technologies to accomplish its objectives. A CEA depends on real-time networking capabilities together with such network oriented functions as location, presence, proximity, and identity.

Another distinguishing characteristic of a CEA is the implicit assumption that network services will be available as callable services within the SOA frameworks from which the CEA is constructed. To provide callable services, the network services which are available today must be made virtual and component-like.

CEAs apply to business processes as well as to settings where there is no obvious business process to improve (e.g., games, entertainment video). CEAs that apply to business processes are referred to as communications enabled business processes or communications enabled business solutions.

Importance

CEAs are important for at least four reasons:

The convergence of (i) CEAs, (ii) broadband and (iii) millions of different devices connected to the network is expected to significantly affect the communications industry.

CEAs introduce a fundamental change in the way that information communications technology (ICT) applications and services are designed, developed, delivered, and used. To date, SOA has focused on building IT applications only, and the network has mostly been treated as a transport pipe. CEAs incorporate communications capability into any application. This requires that network services be made virtual and component-like as well as callable within a SOA framework. CEA implementation entails a significant reorganization of present network management functionality.

CEAs bring together the richness of IT applications with the sophistication and intelligence of communications networks. This enables greater customization, greater simplification of interactions, and automatic adaptation to users' environments and preferences.

Making network components from multiple vendors work in a mashup will be a major challenge. The service level agreements (SLAs) for these mashups will be difficult to define and deliver upon.

Examples

A tech savvy entrepreneur can integrate highly secure services such as messaging, voice, conference call, authentication and inbound short message service into an IT application for the purpose of delivering a customized solution in hours or days at a fraction of the cost of large packaged applications or custom development projects.

A patient is discharged more quickly because the patient care application used by authorizing medical personnel can reach out to the discharge application, wherever those personnel are.

A new policy is processed and approved more quickly because the client’s insurance agent initiates real-time communications with people who have reviewed the policy and are required to approve it.

Faster and more effective emergency response is provided because the first responder application recognizes the availability and location of key resources.

An industrial customer problem is resolved more quickly because the project management application scheduled the earliest possible conference call with all key available stakeholders and delivered all relevant information to them.

A data center backup package that runs overnight must be complete by 8 a.m. when the network turns back to daytime operations. The application recognizes that it will not complete in time - so it makes a request of the network for more capacity. The network can apply logic to translate the request into a set of commands to the various nodes to do whatever is required for the task to be completed by 8 a.m. (e.g., change the priority, provision more capacity, allocate more wavelengths).
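The backup example boils down to a small calculation: compare the rate the job needs in order to finish by the deadline with the rate it currently gets, and ask for the difference. The sketch below shows that step only. The `BackupJob` type, its fields and the sample numbers are hypothetical, and no real network control API is implied; how the request would be delivered to the network is outside what the text describes.

```python
from dataclasses import dataclass


@dataclass
class BackupJob:
    remaining_bytes: int        # data still to be transferred
    seconds_to_deadline: float  # time left before 8 a.m.
    current_rate_bps: float     # throughput the job gets today, bits per second


def required_rate_bps(job: BackupJob) -> float:
    """Rate needed to move the remaining data before the deadline."""
    return job.remaining_bytes * 8 / job.seconds_to_deadline


def extra_capacity_bps(job: BackupJob) -> float:
    """Additional capacity to request; zero if the job is already on track."""
    return max(0.0, required_rate_bps(job) - job.current_rate_bps)


# Hypothetical job: 1.8 TB left, two hours to go, currently running at 1 Gbps.
job = BackupJob(remaining_bytes=1_800_000_000_000,
                seconds_to_deadline=2 * 60 * 60,
                current_rate_bps=1e9)

shortfall = extra_capacity_bps(job)
print(f"Request {shortfall / 1e9:.1f} Gbps of extra capacity")
```

With these made-up numbers the job needs 2 Gbps in total, so it would ask for 1 Gbps more; the network could then satisfy that by changing priority, provisioning capacity or allocating wavelengths, as described above.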

Cad mouse 1

CAD is sometimes translated as "computer-assisted", "computer-aided drafting", or a similar phrase. Related acronyms are CADD, which stands for "computer-aided design and drafting"; CAID, for "computer-aided industrial design"; and CAAD, for "computer-aided architectural design". All these terms are essentially synonymous, but there are a few subtle differences in meaning and application.

CAD was originally the three-letter acronym for "Computer Aided Drafting", as in the early days CAD was really a replacement for the traditional drafting board. The term is now often used interchangeably with "Computer Aided Design" to reflect the fact that modern CAD tools do much more than just drafting.

Kt88 power tubes in traynor yba200 amplifier

ChipScaleClock2 HR

Research

Chip-scale atomic clock unveiled by NIST

Most research focuses on the often conflicting goals of making the clocks smaller, cheaper, more accurate, and more reliable.

New technologies, such as femtosecond frequency combs, optical lattices and quantum information, have enabled prototypes of next generation atomic clocks. These clocks are based on optical rather than microwave transitions. A major obstacle to developing an optical clock is the difficulty of directly measuring optical frequencies. This problem has been solved with the development of self-referenced mode-locked lasers, commonly referred to as femtosecond frequency combs. Before the demonstration of the frequency comb in 2000, terahertz techniques were needed to bridge the gap between radio and optical frequencies, and the systems for doing so were cumbersome and complicated. With the refinement of the frequency comb these measurements have become much more accessible and numerous optical clock systems are now being developed around the world.

As in the radio range, absorption spectroscopy is used to stabilize an oscillator, in this case a laser. When the optical frequency is divided down into a countable radio frequency using a femtosecond comb, the bandwidth of the phase noise is also divided by that factor. Although the bandwidth of laser phase noise is generally greater than that of stable microwave sources, after division it is less.

The two primary systems under consideration for use in optical frequency standards are single ions isolated in an ion trap and neutral atoms trapped in an optical lattice.[11] These two techniques allow the atoms or ions to be highly isolated from external perturbations, thus producing an extremely stable frequency reference.

Optical clocks have already achieved better stability and lower systematic uncertainty than the best microwave clocks.[11] This puts them in a position to replace the current standard for time, the caesium fountain clock.

Atomic systems under consideration include but are not limited to Al3+, Hg+/2+,[11] Hg, Sr, Sr+, In3+, Ca3+, Ca, Yb2+/3+ and Yb

Nistf1ph

Atomic clocks do not use radioactivity, but rather the precise microwave signal that electrons in atoms emit when they change energy levels. Early atomic clocks were based on masers. Currently, the most accurate atomic clocks are based on absorption spectroscopy of cold atoms in atomic fountains such as the NIST-F1.

National standards agencies maintain an accuracy of 10^-9 seconds per day (approximately 1 part in 10^14), and a precision set by the radio transmitter pumping the maser. The clocks maintain a continuous and stable time scale, International Atomic Time (TAI). For civil time, another time scale is disseminated, Coordinated Universal Time (UTC). UTC is derived from TAI, but synchronized, by using leap seconds, to UT1, which is based on actual rotations of the earth with respect to the solar time.
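The two accuracy figures quoted above describe the same thing in different units, which a short calculation makes visible. This is only a worked conversion of one nanosecond per day into a fractional error; the function and constant names are chosen for the example.

```python
SECONDS_PER_DAY = 24 * 60 * 60  # 86,400


def fractional_error(drift_seconds_per_day: float) -> float:
    """Convert a daily drift in seconds into a dimensionless fraction."""
    return drift_seconds_per_day / SECONDS_PER_DAY


def days_to_drift(total_seconds: float, drift_seconds_per_day: float) -> float:
    """Days needed to accumulate `total_seconds` of error at a steady drift."""
    return total_seconds / drift_seconds_per_day


drift = 1e-9  # one nanosecond per day
fraction = fractional_error(drift)
print(f"fractional error: {fraction:.2e}")
print(f"days to drift one second: {days_to_drift(1.0, drift):.0f}")
```

The fraction comes out a little above 1 part in 10^14, which matches the "approximately" in the text.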

Macintosh 128k transparency

Description

Macintosh 128k transparency.png

A Macintosh 128K (that has apparently been upgraded to 512K, see window) running the Italian version of Finder 4.1 on a transparent background. Note the add-on "Programmer's Switch" on the lower-left corner of the case, which includes reset and interrupt buttons. Based on Macintosh 128k No Text.jpg, which was edited by TDS from a version found on Wikimedia Commons (Macintosh 128k.jpg) to remove text that obstructed the photograph. It is desirable that the current image be recreated in JPG from that source. The original photograph is from [1], which also shows the back of the machine, confirming it is the original 128K model. This image has been released under the GFDL. Because of the free license, it is currently the logo of WikiProject Macintosh.

Internet Kiosk VTBS